Author: Cameron Mickell

A common question from novice planners is "Which RF propagation model is best for my technology?"

Our users work with diverse technologies, time constraints and accuracy requirements, so there is not always a quick answer, but knowing the key types of models and where to use them makes a big difference to accuracy. There isn’t a one-size-fits-all approach to model selection for radio planning, but there are definitely good defaults.

TL;DR We now recommend ITU-R P.1812 as a default model.

To help answer this question in detail, we’ve decided to explain a little about each propagation model, describe some relevant use cases and then conduct a series of measurable experiments to compare model performance and offer practical recommendations for users who need a clear starting point so they can hit the ground running with their radio planning. In this blog, we will look at model types, when to use them and how to make an educated decision on which model to use for your radio project.

Communications Technologies across the EM spectrum

First, it is important to understand that there are vastly different use cases for radio technologies across the electromagnetic spectrum. Each of these technologies has its own spectrum requirements, frequency, bandwidth and power limits, which have a strong influence over any potential coverage or point-to-point link. However, more impactful than any of these is the environment and the varied ways in which it interacts with radio signals.

Terrain, buildings and vegetation all interact differently with radio waves of varying frequency, and different propagation models attempt to capture these behaviours in different ways. Older (1960s) models pre-date developments in high resolution data, so while they may perform well in situations close to their intended use, like downtown Tokyo in the case of Okumura-Hata, they will underperform in other scenarios without adjustments.

Because of this complexity, choosing the right model depends not only on your radio system but also on the environment you’re operating in. Below is a quick overview of common technologies and where they sit in the spectrum. We will look at the environment later.

Communications Technologies

VHF (30–300 MHz)

Use case: Wide area voice comms, typically extending to the radio horizon.

Propagation at VHF frequencies is highly effective over long distances due to strong diffraction, good performance over undulating terrain, and relatively low attenuation through vegetation. These characteristics make VHF particularly well-suited to wide area narrow band voice networks and maritime or land mobile radio.

VHF applications cover both broadcast and two-way communications, with the former using significantly taller antenna masts and higher transmission power.
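As a rough illustration of the radio horizon mentioned above, the sketch below estimates smooth-earth line-of-sight range from the two antenna heights. It assumes the common 4/3 effective earth radius approximation for standard atmospheric refraction and ignores terrain and clutter entirely. The function names are chosen for this example and are not part of CloudRF.

```python
import math

EARTH_RADIUS_KM = 6371.0


def radio_horizon_km(antenna_height_m: float, k_factor: float = 4.0 / 3.0) -> float:
    """Approximate distance to the radio horizon over smooth earth, in km.

    Assumes an effective earth radius of k_factor * 6371 km (k = 4/3 is the
    usual standard-atmosphere approximation).
    """
    effective_radius_km = k_factor * EARTH_RADIUS_KM
    height_km = antenna_height_m / 1000.0
    # d = sqrt(2 * k * R * h), neglecting the very small h^2 term
    return math.sqrt(2.0 * effective_radius_km * height_km)


def max_line_of_sight_km(tx_height_m: float, rx_height_m: float) -> float:
    """Sum of both horizons: the furthest the two antennas can 'see' each other."""
    return radio_horizon_km(tx_height_m) + radio_horizon_km(rx_height_m)


if __name__ == "__main__":
    # Illustrative heights only: a 30 m mast talking to a 2 m handheld
    print(f"{max_line_of_sight_km(30.0, 2.0):.1f} km")
```

Real coverage will usually fall short of this figure once hills and clutter are considered, which is why a terrain-aware model is still needed.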

LoRa / LPWAN (433 MHz and 868 MHz EU, 915 MHz US)

Use case: IoT devices, low power sensors, hobbyist networking

Propagation at these frequencies is generally better through vegetation compared to higher frequencies, allowing the signals to penetrate foliage with relatively low attenuation. This leads to good overall range while supporting modest data rates that are well suited to low power IoT telemetry applications.

L/S Band (1–2 GHz / 2–4 GHz)

Rough equivalent: Wi-Fi, Broadcasting, Tactical radios, Microwave links

Use case: IP based networking, voice, short to medium range data links

These frequencies typically support distances up to several kilometres, depending on antenna height, power, and environmental clutter. Propagation in this range is sensitive to buildings and clutter, which limits range in dense areas but still provides reliable line-of-sight performance for short to medium distance networking. These bands support higher data rates than VHF or sub-GHz bands, enabling technologies such as Wi-Fi, video streaming or autonomous drones/robots, but at the expense of reduced penetration through walls and vegetation.

LTE / 4G / 5G (700 MHz – 2.6 GHz)

Use case: Mobile phones, tablets, broadband services

Propagation in the LTE bands offers a balanced compromise between range and capacity, allowing signals to travel several kilometres in outdoor environments while still maintaining the bandwidth needed for modern mobile broadband services.

Lower frequency LTE bands propagate further and diffract more effectively over terrain, whereas higher frequency bands are more affected by clutter and require denser cell deployments. This is why the uplink from the low power handset uses the lower frequencies, where path loss is lower.

Because of this, LTE cells can have very different performance characteristics around terrain and clutter which makes choosing the right propagation model important.

Across all these technologies, the environment is a key factor in determining how far or how well you can communicate. Propagation models attempt to quantify just how much the environment is going to affect the behaviour of a signal to help engineers build out these complex communications systems.

How do Propagation Models work?

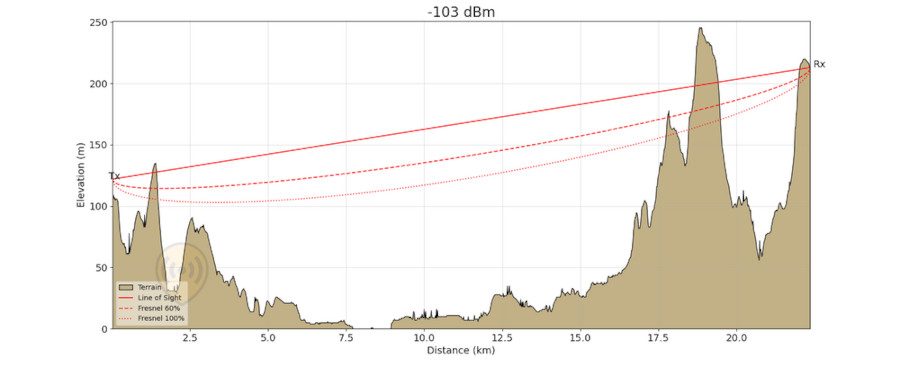

Radio propagation models provide mathematical formulas to predict the behaviour of radio waves between two points. Typically, each model aims to estimate the path loss along a link. By repeating this calculation for many adjacent points, a wide area can be studied to produce a signal map.

Prediction of path loss is necessary for radio engineers and operators to create accurate link budgets and generate functional communication systems or sensors. Across all models, there are two principles:

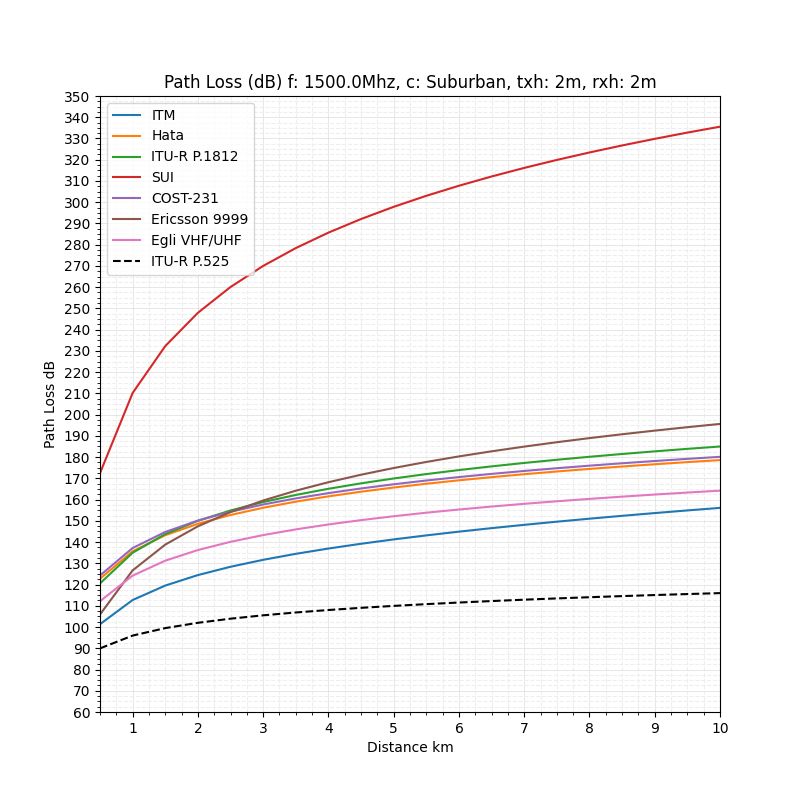

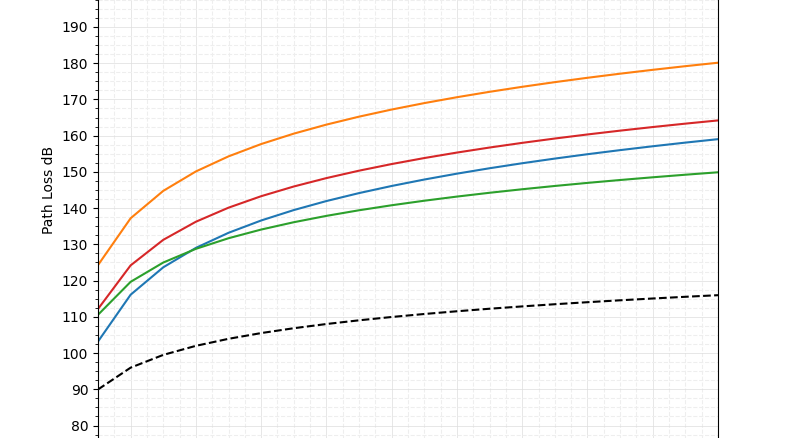

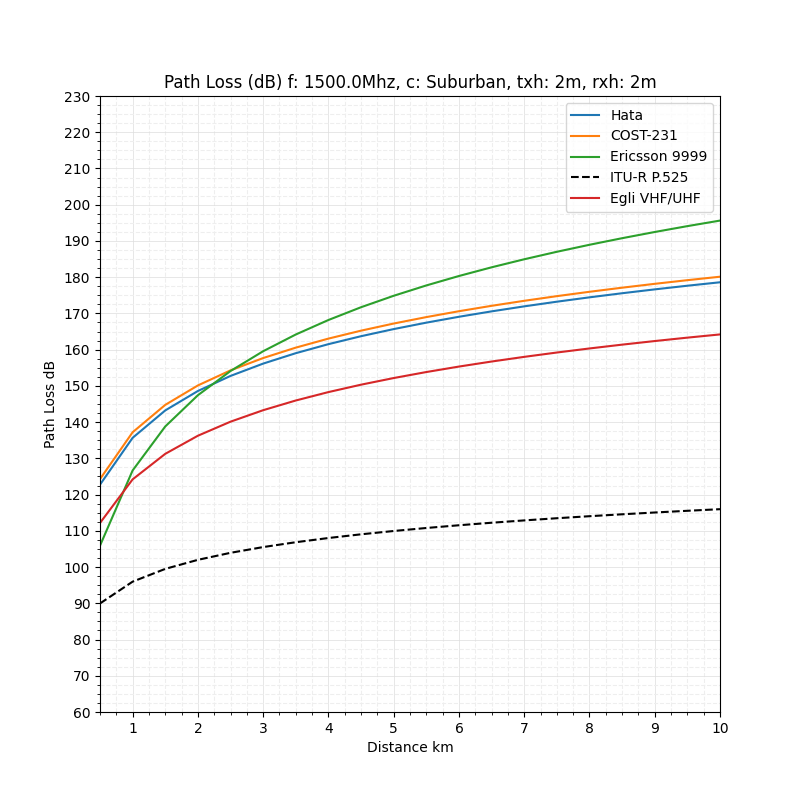

The common principle of free space loss is that path loss increases with both distance and frequency. The plotted curves below demonstrate this well.
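The distance and frequency dependence can be sketched with the standard free space path loss formula, which is the basis of ITU-R P.525, using distance in kilometres and frequency in megahertz. This is a minimal illustration rather than CloudRF's implementation, and the function name is chosen for this example.

```python
import math


def free_space_path_loss_db(distance_km: float, frequency_mhz: float) -> float:
    """Free space path loss in dB for distance in km and frequency in MHz."""
    if distance_km <= 0 or frequency_mhz <= 0:
        raise ValueError("distance and frequency must be positive")
    # 32.45 dB is the constant for km and MHz units
    return 32.45 + 20.0 * math.log10(distance_km) + 20.0 * math.log10(frequency_mhz)


if __name__ == "__main__":
    # Same 10 km link at the frequencies tested later in this blog
    for frequency_mhz in (41.5, 200.0, 800.0, 1800.0, 2100.0):
        loss_db = free_space_path_loss_db(10.0, frequency_mhz)
        print(f"{frequency_mhz:7.1f} MHz: {loss_db:6.1f} dB")
```

Because both terms are logarithmic, doubling either the distance or the frequency adds about 6 dB of loss.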

The next principle is that each model has a unique path loss for an identical link. These curves are representative of an ideal test case of transmitting to a receiver with line of sight across uniform terrain.

We can see different models give different results before budgeting for other sources of variation. In order to understand why this occurs we need to look at some of the key features of a model so we know when to select each one and how they work to use them effectively.

Parts of a propagation model

Each model is essentially an attempt to solve a planning problem for a communications system. Sometimes these are very generic problems and sometimes they are tied to a specific technology and frequency range, which gives the models very different reasons for existing. Bear in mind that some pre-date consumer computing! Researchers past and present have looked for practical solutions, drawn from theory or from practice, to a wide range of communications problems. This has led to two main types of radio propagation model: deterministic and empirical.

Deterministic Models

Deterministic models are formulas which take input variables and consistently produce the same output, as opposed to “stochastic” models, which are probabilistic. Researchers derive deterministic models from first principles and other phenomena to give the best possible representation of radio wave behaviour for a given set of assumptions and inputs. Both inputs and assumptions vary from model to model due to the complexity and motivation for the model.

For planners, this means the model always treats input factors consistently. It means that accurate inputs will lead to a high degree of accuracy in the output. The opposite, stochastic models, are more commonly used in fields like finance or weather modelling where there is uncertainty around a given input or future conditions.

Empirical Models

Empirical models are data driven, built from survey data which is refined to produce a prediction of wave behaviour based on prior observations. The advantage of these models is that they can act as ‘black-box’ predictors that do not require describing the internal physics of the system yet still produce outputs that fit observed conditions.

The risk of using an empirical model is that if it was built from tower data in a Japanese city and you use it with handheld radios in a desert, it will not perform well at all.

Input Parameters

For both types of models, there are assumed input parameters that planners need to choose for a model to be applicable to their use case. For users, it is often unclear what each setting controls or how to choose an appropriate context.

While propagation models provide the mathematical basis for predicting radio performance, their accuracy is ultimately constrained by the quality of the environmental data fed into them.

Even the most sophisticated model cannot compensate for incomplete or low‑resolution terrain and clutter inputs. This makes environmental data one of the biggest contributory factors in successful RF planning.

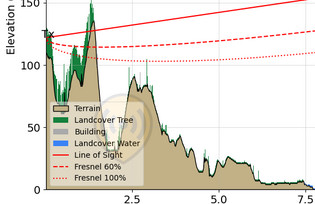

Terrain Data

Terrain refers to the physical shape of the earth such as hills, valleys, ridges and slopes. These features directly affect radio propagation through shadowing, diffraction, and reflection. Planning tools represent terrain using tiles sized according to their chosen resolution. In CloudRF, the resolution can be adjusted from the Output section, with higher resolution leading to larger compute times, bigger output files and more accurate representation of the world.

So, when to use a certain resolution? In CloudRF there are resolutions from 1m to 300m, however key thresholds to note are 2m, 10m and 30m which map to our source data.

- 30m global datasets. Suitable for coarse planning or large areas. Limited detail often causes over‑optimistic coverage in built‑up or rugged environments. CloudRF is preloaded with 30m DSM coverage for most of the globe up to 60N with additional high latitude data for Scandinavia and Alaska.

- 10m national datasets and space based land cover (trees etc). The balance between performance and accuracy for tactical and commercial use. Well suited for coverage maps with radii up to tens of kilometres.

- 2m LiDAR. Highly accurate and excellent for urban, industrial or complex terrain analysis. Particularly beneficial for UHF deployments in cities or complex industrial/agricultural sites. Because most propagation issues occur when line‑of‑sight is obstructed, a high terrain resolution gives a close fit to the real environment.
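To get a feel for why resolution affects compute time and file size, the sketch below counts the cells in a square grid enclosing a circular coverage area. It is a back-of-envelope estimate with a hypothetical function name, not a description of how CloudRF tiles or limits a calculation.

```python
import math


def grid_cell_count(radius_km: float, resolution_m: float) -> int:
    """Number of cells in a square grid that encloses a circle of radius_km."""
    if radius_km <= 0 or resolution_m <= 0:
        raise ValueError("radius and resolution must be positive")
    cells_per_side = math.ceil(2.0 * radius_km * 1000.0 / resolution_m)
    return cells_per_side * cells_per_side


if __name__ == "__main__":
    # The three source-data thresholds named above, for a 10 km radius
    for resolution_m in (30.0, 10.0, 2.0):
        print(f"{resolution_m:4.0f} m: {grid_cell_count(10.0, resolution_m):,} cells")
```

The cell count grows with the square of the resolution improvement, so moving from 10m to 2m data over the same radius means roughly 25 times more points to evaluate.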

Clutter Data and Contexts

Clutter describes man‑made or natural surface features that are above the terrain dataset—buildings, trees, industrial areas, bodies of water, or open ground. Different wavelengths interact with clutter in mostly predictable ways:

VHF and lower tend to penetrate vegetation more effectively but are still attenuated by dense structures. UHF, LTE and Wi‑Fi suffer greater attenuation from foliage and urban environments. LoRa and LPWAN rely heavily on clutter accuracy for predicting street‑level performance.

Within CloudRF, clutter is represented as classification layers with associated nominal heights and attenuation values. Selecting the correct clutter model ensures that urban and rural areas are treated appropriately, since the losses applied can vary dramatically between tree canopy, suburban housing, or high‑rise commercial zones. This allows for clutter tuning which can help with fitting survey/calibration data to a prediction.

Instead of clutter, empirical models (Okumura-Hata, COST 231 and Ericsson 9999) use contexts as factors to help tune their attenuation to an environment. These contexts are fixed empirical curves intended to represent the average path loss for a typical environment: urban, mixed (suburban) and unobstructed (open ground). Because of this, they will often not be terrain aware and in our experience do not adapt well to real clutter. The graphs below show how contexts can vary path loss in ways that aren’t always intuitive.

Now we know what kinds of inputs our models are expecting, it is worth understanding the differences with the models available on CloudRF.

Model Bios

Irregular Terrain Model (Longley-Rice)

The Longley-Rice model is an old but trusty general-purpose model developed to meet the needs of television broadcasting during the 1960s. As such, its input parameters have a focus on longer range high-low use cases. The model is named for its ability to account for terrain variations along the signal path. Naturally, ITM requires quality terrain data to achieve best performance. It can be used from 20 MHz to 20 GHz over ranges of 1-2000 km with antennas from 0.5 m to 3 km in height.

ITU–R P.1812

The P.1812 model covers VHF and UHF bands and has been recommended by the ITU since 2007 for terrestrial point-to-area services. The model incorporates Bullington multi-obstacle diffraction and is effective from 30 MHz to 3 GHz, making it well suited for modern commercial wireless technologies. Like the ITM, it factors in changes in terrain and incorporates clutter data into its calculations, allowing it to perform very well when supplied with high quality terrain and clutter data.

General Purpose

The General-Purpose model on CloudRF is the ITU-R P.525-2 model with an additional 20dB of attenuation. The P.525-2 model is the ITU recommended free space attenuation model. It can be used across all RF frequencies from VHF up into 100GHz. With accurate clutter and land cover data, this model can be tuned to achieve single digit variation from field measurements in rural or suburban environments. It is well suited to signals where both ends of a link are at ground level, like portable radio networks. This is outside of the comfort zone of typical high-low cellular models.
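The description above (free space loss plus 20 dB) can be sketched as follows, together with a simple link budget to turn path loss into received power. This is a minimal illustration under stated assumptions: the function names are hypothetical, clutter losses are not included, and CloudRF's own implementation may differ in detail.

```python
import math

GENERAL_PURPOSE_OFFSET_DB = 20.0  # additional attenuation stated in the text above


def free_space_path_loss_db(distance_km: float, frequency_mhz: float) -> float:
    """Standard free space path loss (km, MHz) in dB."""
    return 32.45 + 20.0 * math.log10(distance_km) + 20.0 * math.log10(frequency_mhz)


def general_purpose_path_loss_db(distance_km: float, frequency_mhz: float) -> float:
    """Free space loss plus the fixed 20 dB offset, before any clutter loss."""
    return free_space_path_loss_db(distance_km, frequency_mhz) + GENERAL_PURPOSE_OFFSET_DB


def received_power_dbm(tx_power_dbm: float, tx_gain_dbi: float,
                       rx_gain_dbi: float, path_loss_db: float) -> float:
    """Basic link budget: transmit power plus antenna gains minus path loss."""
    return tx_power_dbm + tx_gain_dbi + rx_gain_dbi - path_loss_db


if __name__ == "__main__":
    # Illustrative handheld link: 1 W (30 dBm), 2 dBi antennas, 2 km at 450 MHz
    loss_db = general_purpose_path_loss_db(2.0, 450.0)
    print(f"path loss {loss_db:.1f} dB, "
          f"received {received_power_dbm(30.0, 2.0, 2.0, loss_db):.1f} dBm")
```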

Okumura-Hata

The Okumura-Hata model is an empirically derived model for path loss prediction in urban environments.

It assumes that the transmitter is much higher than the receiver: specifically, transmitter heights of 30-200 m and receiver heights of 1-10 m over distances of 1-20 km. The frequency range of the original model is 150 MHz – 1.5 GHz. These assumptions and range make this model best suited for cellular or broadcast environments. It uses an environment context to set its attenuation.
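To show how an environment context changes the result, here is a sketch of the widely published textbook Hata formulation (small or medium city form) with its suburban and open area corrections. The context names and the strict range checks are choices made for this example; it is not presented as the exact implementation used in CloudRF.

```python
import math


def hata_path_loss_db(frequency_mhz: float, base_height_m: float,
                      mobile_height_m: float, distance_km: float,
                      context: str = "urban") -> float:
    """Textbook Hata median path loss in dB for a small or medium city."""
    # Reject inputs outside the ranges the model was derived for
    if not 150.0 <= frequency_mhz <= 1500.0:
        raise ValueError("frequency must be 150-1500 MHz")
    if not 30.0 <= base_height_m <= 200.0:
        raise ValueError("base station height must be 30-200 m")
    if not 1.0 <= mobile_height_m <= 10.0:
        raise ValueError("mobile height must be 1-10 m")
    if not 1.0 <= distance_km <= 20.0:
        raise ValueError("distance must be 1-20 km")

    log_f = math.log10(frequency_mhz)
    log_hb = math.log10(base_height_m)
    # Mobile antenna height correction (small or medium city)
    a_hm = (1.1 * log_f - 0.7) * mobile_height_m - (1.56 * log_f - 0.8)
    urban = (69.55 + 26.16 * log_f - 13.82 * log_hb - a_hm
             + (44.9 - 6.55 * log_hb) * math.log10(distance_km))

    if context == "urban":
        return urban
    if context == "suburban":
        return urban - 2.0 * math.log10(frequency_mhz / 28.0) ** 2 - 5.4
    if context == "open":
        return urban - 4.78 * log_f ** 2 + 18.33 * log_f - 40.94
    raise ValueError("context must be 'urban', 'suburban' or 'open'")


if __name__ == "__main__":
    # Illustrative link: 806 MHz, 30 m mast, 1.5 m handset, 3 km
    for context in ("urban", "suburban", "open"):
        print(f"{context:9s} {hata_path_loss_db(806.0, 30.0, 1.5, 3.0, context):6.1f} dB")
```

The same link can differ by tens of dB purely on the choice of context, which is why picking the right one matters so much for empirical models.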

COST 231-Hata

This model is a popular extension of the Okumura-Hata model which brings the upper frequency to 2 GHz. COST (COopération européenne dans le domaine de la recherche Scientifique et Technique) began the Action 231 project to address the need to accurately model 2G mobile systems like GSM around 1995-1999. It was based on data collected from multiple European cities to tune the model for urban environments. Because of this, it is best used in the 1500-2000 MHz range where the user is looking to model dynamic urban environments where LOS is often obstructed. Like Okumura-Hata, it uses environmental contexts to tune its attenuation.

Ericsson 9999

Ericsson extended the Hata model to 1900 MHz with special attention to the 4G and LTE use case in urban environments. Like the COST and Hata models, its environmental parameters can be adjusted to account for different scenarios such as rural, suburban or urban environments.

Egli VHF/UHF

The Egli model was developed by John Egli based on his research with the US Army Signal Corps Labs in the early 1940s. The model is empirically derived from real world path loss measured across irregular terrain with dispersed clutter such as trees, buildings and other structures. The model typically expects 30-300 m tall base stations communicating with a mobile station at 1.5-10 m height. Egli is suitable for VHF and UHF high-low cases below 1.5 GHz. Unlike other empirical models on this list, it doesn’t use environmental contexts, so it is best suited for open rural settings.

Model Bios Quick Reference Table

| Model | Frequency Range | Best Environments/Use | Terrain-Aware? | Clutter or Context Use | Strengths and Notes |
| --- | --- | --- | --- | --- | --- |
| Irregular Terrain Model (Longley-Rice) | 20 MHz – 20 GHz | Mixed terrain, rural, long-range | Yes. Includes hybrid smooth earth diffraction | Use CloudRF Clutter profiles | Good for hilly/mountainous terrain; adaptable to many use cases |
| ITU-R P.1812 | 30 MHz – 3 GHz | VHF/UHF area coverage, suburban–rural, mixed paths | Yes. Includes Delta Bullington Diffraction | Use CloudRF Clutter profiles | Excellent general-purpose model; robust diffraction; needs accurate clutter |
| General Purpose | 1 MHz – 100 GHz | Simple LOS, open areas, clutter-tuned scenarios | Yes (with clutter added) | Use CloudRF Clutter profiles | Easy to use; fully wideband; predictable behaviour; optimistic without clutter |
| Okumura-Hata | 150 MHz – 1.5 GHz | Urban Macro Cells | No | Urban/Suburban/Rural Contexts | Assumes high transmitter; behaves poorly outside operating conditions |
| COST-231 Hata | 1.5 GHz – 2.0 GHz | Urban Macro Cells | No | Urban/Suburban/Rural Contexts | Well validated for cities; good for obstructed LOS macro networks |
| Ericsson 9999 | ~800 MHz – 1900 MHz | Urban Macro Cells (GSM/LTE) | No | Urban/Suburban/Rural Contexts | Flexible; needs calibration measurements; good for early LTE/GSM |
| Egli VHF/UHF | < 1.5 GHz | Rural VHF/UHF | No | Nil | Useful for open rural coverage; good for broadcast-like paths; assumes tall base stations |

Propagation Model Bake Off

To help us make an informed model choice, we will conduct a series of tests using real world measurements, comparing each model’s predictions to our measured data. From this we will be able to compare results across models and see how well they work without diving into clutter tuning. This will lead us to the point where it is possible to make a clear recommendation on which propagation model to choose when starting a project.

Defining accuracy

To grade a model, we need to understand what values indicate an accurate model. When collecting measurements from the real world, there is always a hardware measurement error. Expensive test equipment is expensive for a reason and conversely a cheap SDR is unusable for power measurements.

For our tests, we expect a measurement error around 3 dB which would represent absolute accuracy.

A score of 3 – 6 dB would indicate an excellent result, 6 – 9 dB is a good match and up to 12 dB is ok. A score higher than 12 dB would be considered an inaccurate model and/or measurements.

Both the statistical mean and the Root Mean Square (RMS) are compared. Achieving a low mean is easy enough through over fitting results but a low RMS is much harder in an urban environment as high resolution clutter must be tuned to match diverse coverage results.
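The two statistics and the grading bands above can be sketched as below. The sign convention (predicted minus measured, so a positive mean means the model is optimistic) is an assumption inferred from how the results are discussed later, and the function names and sample values are purely illustrative.

```python
import math


def error_stats(predicted_db, measured_db):
    """Return (mean error, RMS error) in dB for paired predictions and measurements."""
    if len(predicted_db) != len(measured_db) or not predicted_db:
        raise ValueError("inputs must be non-empty and the same length")
    # Assumed convention: error = predicted - measured
    errors = [p - m for p, m in zip(predicted_db, measured_db)]
    mean_error = sum(errors) / len(errors)
    rms_error = math.sqrt(sum(e * e for e in errors) / len(errors))
    return mean_error, rms_error


def grade(rms_error_db: float) -> str:
    """Map an RMS error onto the bands used in this blog."""
    if rms_error_db <= 6.0:
        return "excellent"
    if rms_error_db <= 9.0:
        return "good"
    if rms_error_db <= 12.0:
        return "ok"
    return "inaccurate"


if __name__ == "__main__":
    # Made-up sample values (dBm), not survey data
    predicted = [-80.0, -95.0, -100.0, -70.0]
    measured = [-90.0, -85.0, -110.0, -60.0]
    mean_error, rms_error = error_stats(predicted, measured)
    print(f"mean {mean_error:.1f} dB, RMS {rms_error:.1f} dB, {grade(rms_error)}")
```

In the sample data the over- and under-predictions cancel out to a mean of zero while every point is 10 dB wrong, which is why the RMS is the harder number to get down.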

We will look at 41.5MHz, 200MHz, 800MHz, 1800MHz and 2100MHz which give us a broad frequency range to test across.

VHF (41.5 & 200MHz)

VHF broadcasting is an old and difficult problem where the long range and varying terrain can disrupt line of sight to the receiver. Power levels are significantly higher and the antennas are mounted on very tall masts.

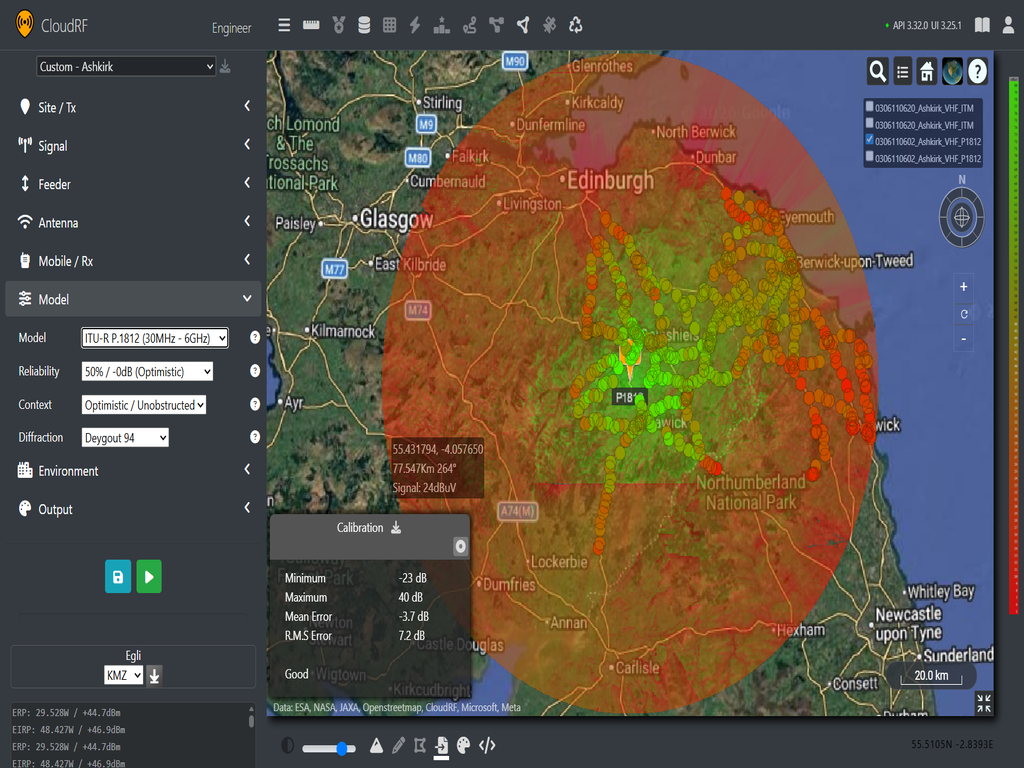

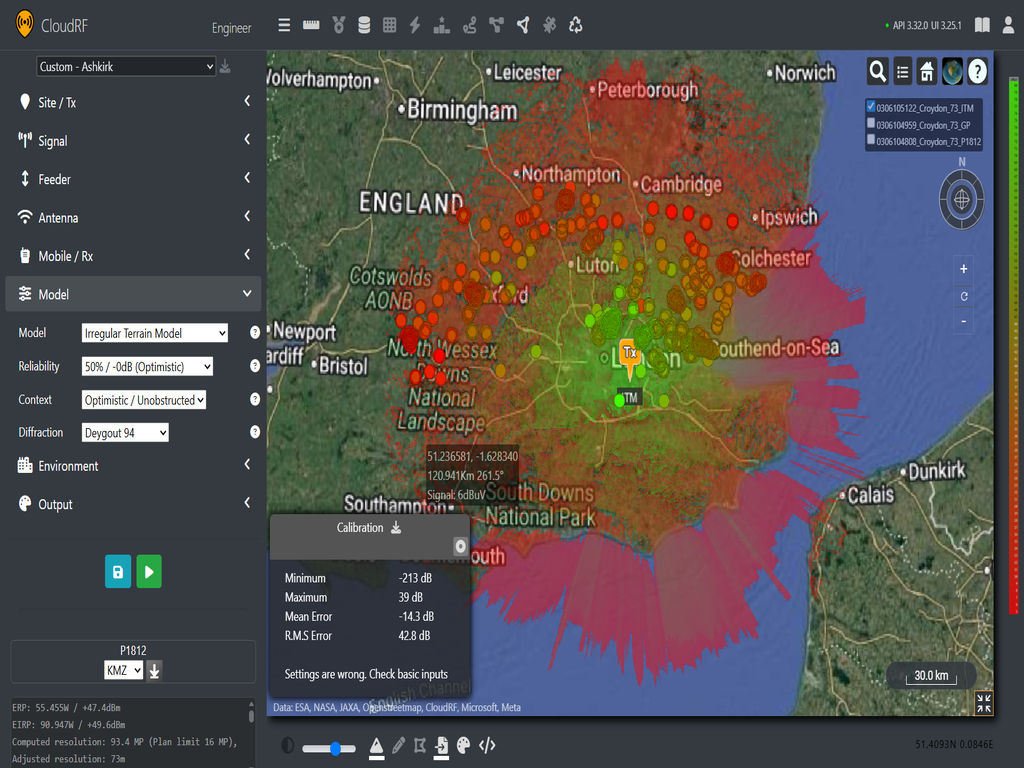

To test performance of models in this range, we referenced a dataset collected by the ITU’s Study Group 3 which contains VHF broadcast data for various locations around the UK, US and Europe. We will be using data from Ashkirk, Croydon and Emley Moor (41.5, 191.25 and 196.25 MHz). Each area typically has over 1000 data points collected around the broadcast region, measured in field strength (dBμV/m). Using CloudRF, we can model expected field strength using a selection of models to see which best fits the data.

It should be noted that at this radius, terrain and clutter resolution is reduced on CloudRF due to commercial limits not present on a private server. However, as we have multiple large data sets, we can still be confident in our predictions if we see consistent performance results from case to case.

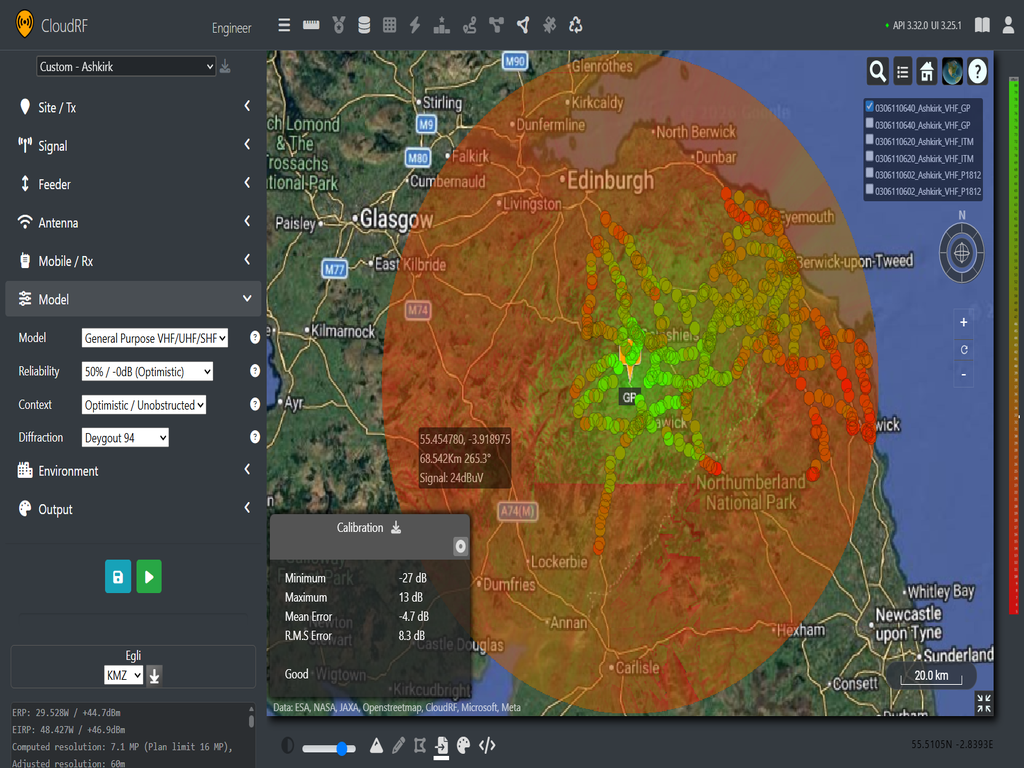

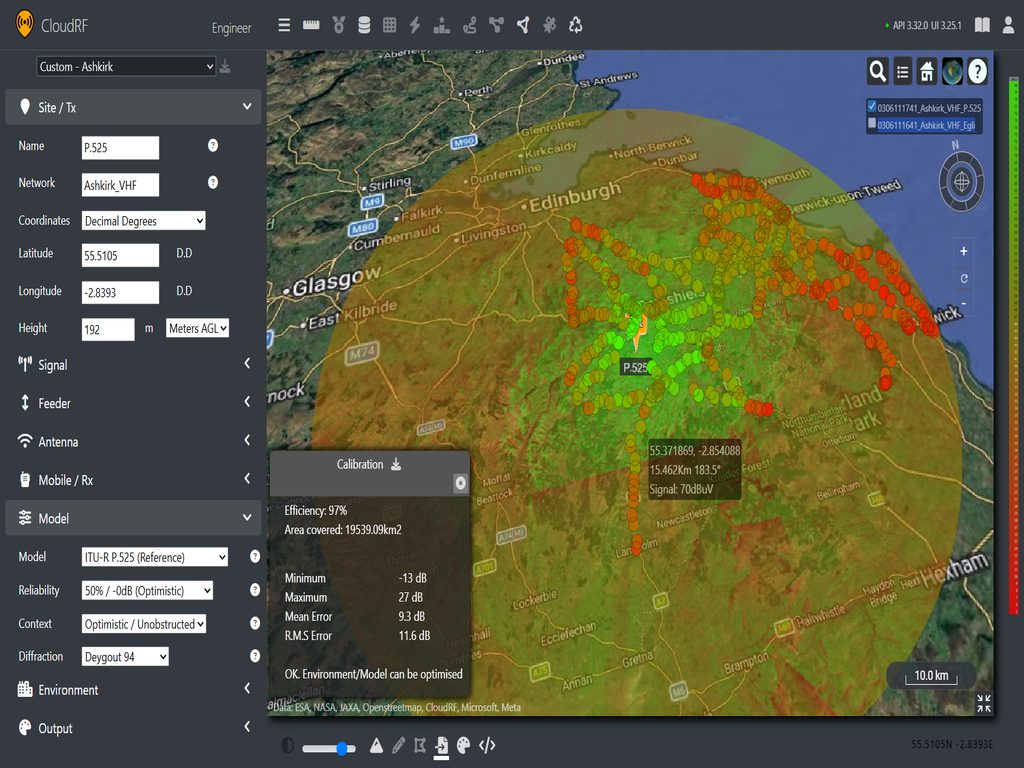

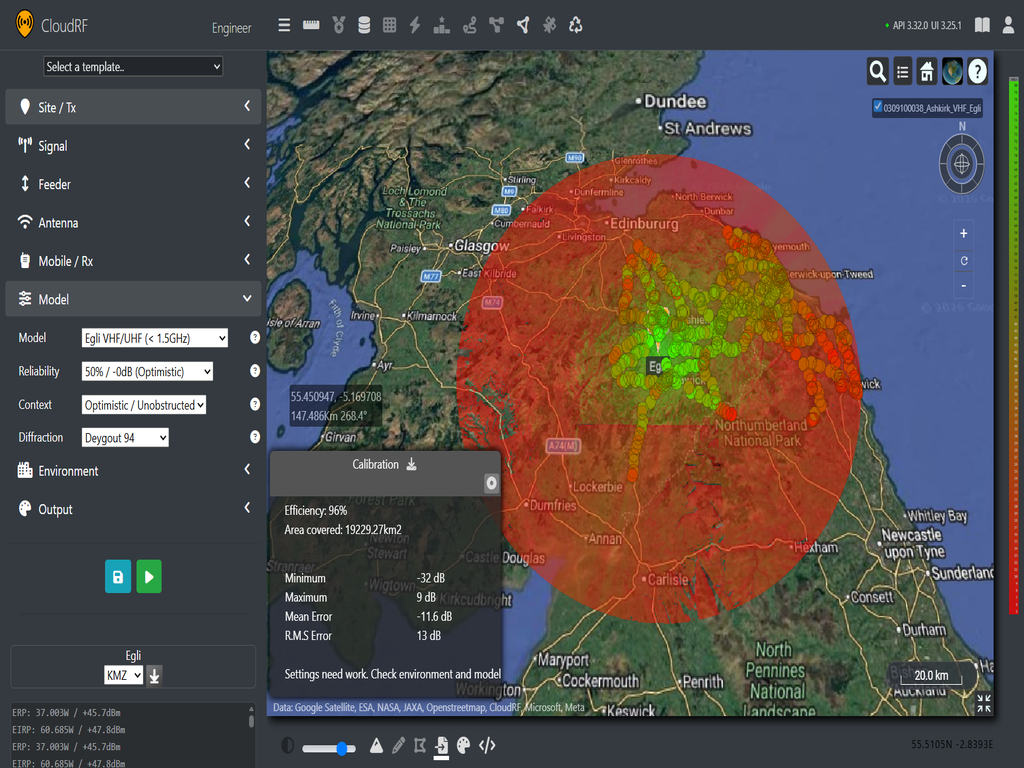

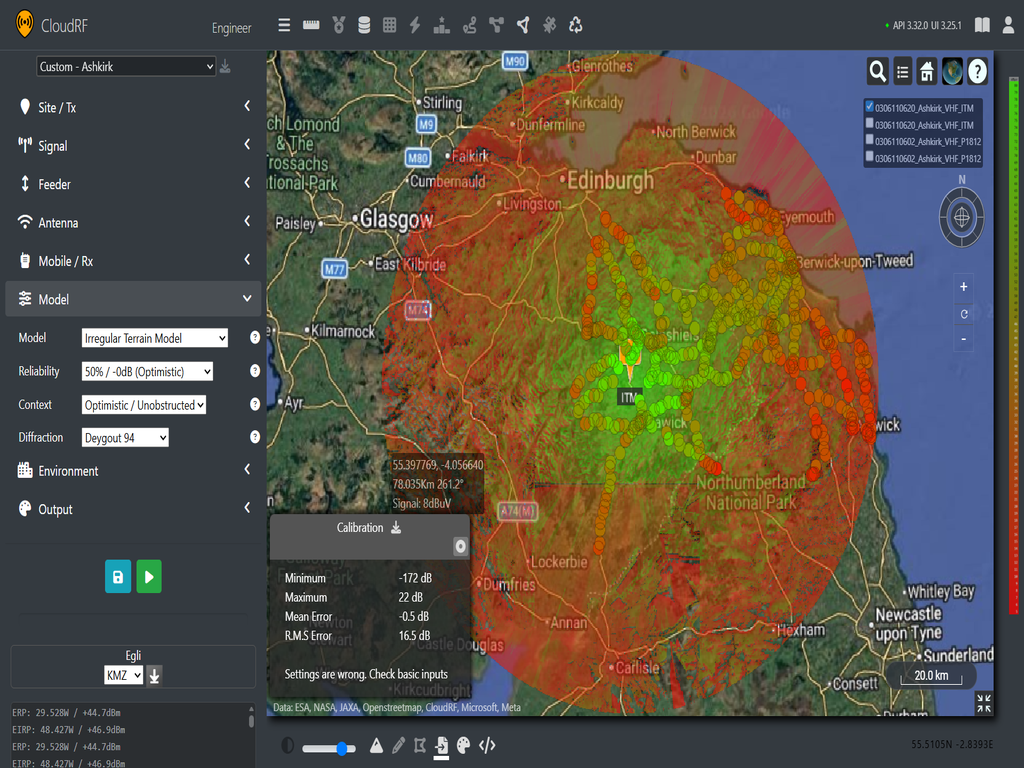

The first test involves a dataset collected from the Ashkirk broadcasting tower in Selkirkshire, Scotland. The VHF antenna sits 192 m above the ground, so it is very high compared to cellular or handheld radio use cases. The receive antenna is fixed at 4.3 m, which will make it taller than most trees and clutter in the area. The data set contains 534 data points within an 80 km radius of the tower.

Ashkirk (41.5MHz)

- ITU-R P.1812 (Mean: -3.7 dB, RMS: 7.2 dB)

- General Purpose (Mean: -4.7 dB, RMS: 8.3 dB)

- ITU-R P.525 (Mean: 9.3 dB, RMS: 11.6 dB)

- Egli (Mean: -11.6 dB, RMS: 13 dB)

- ITM (Mean: -0.5 dB, RMS: 16.5 dB)

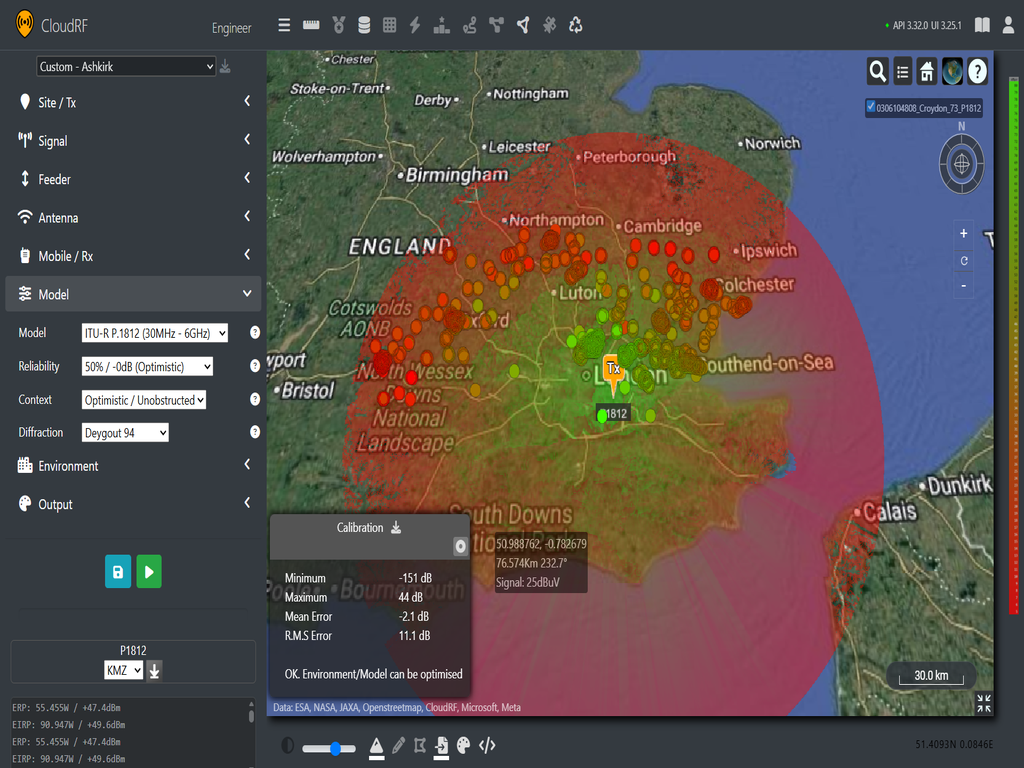

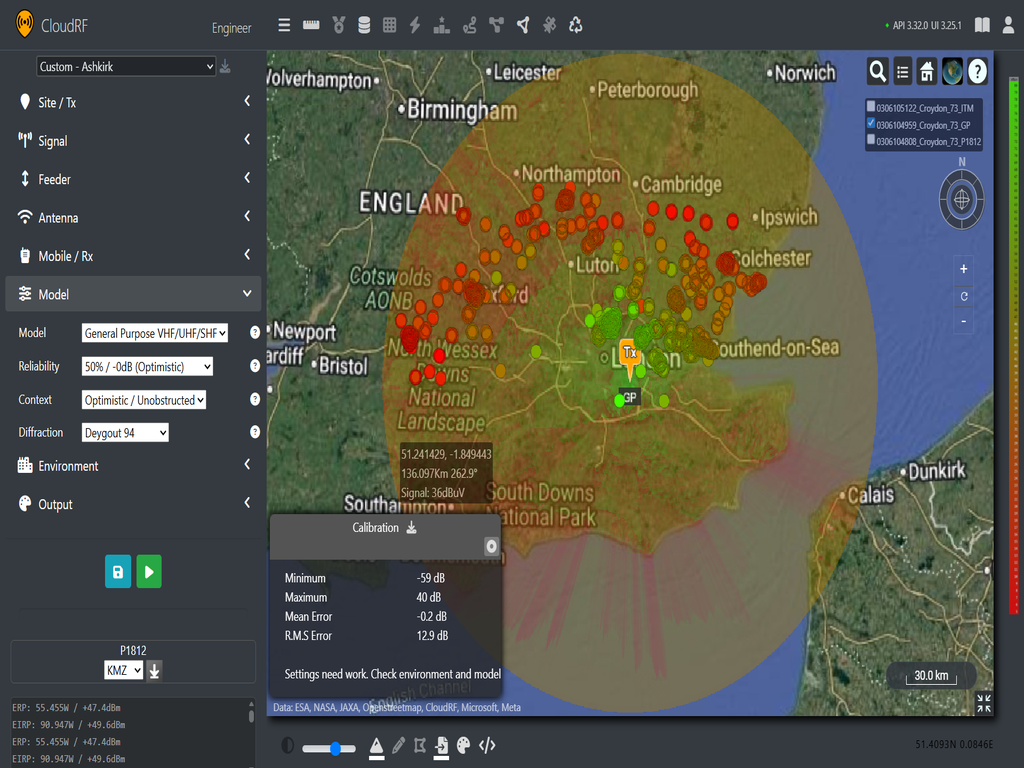

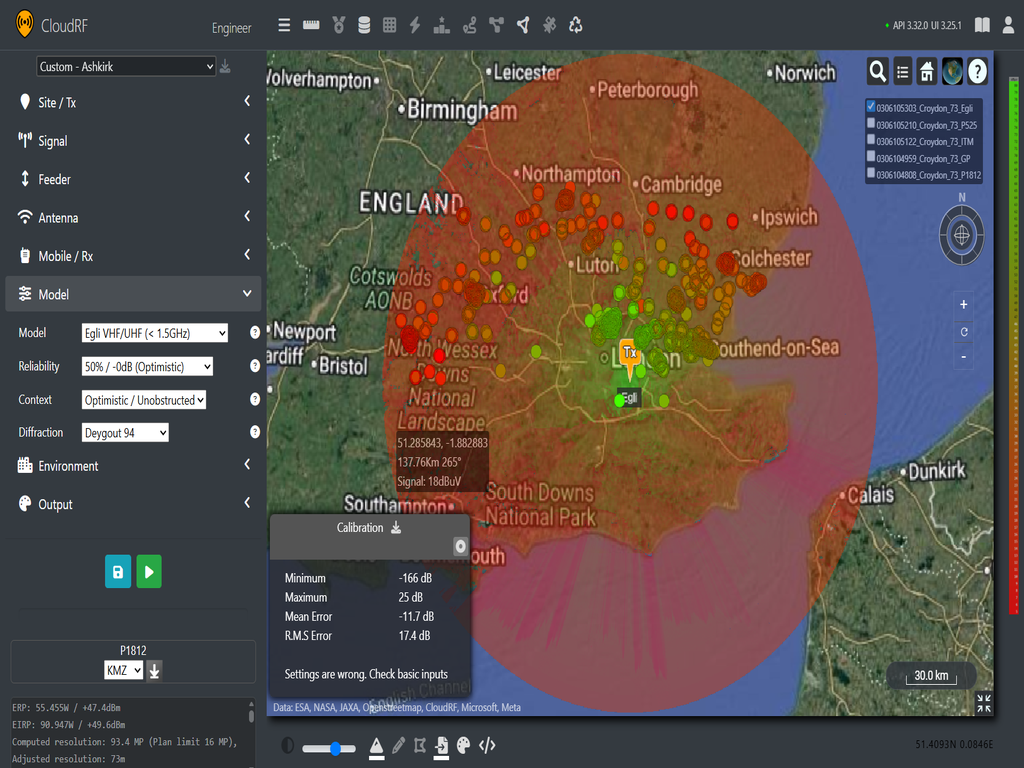

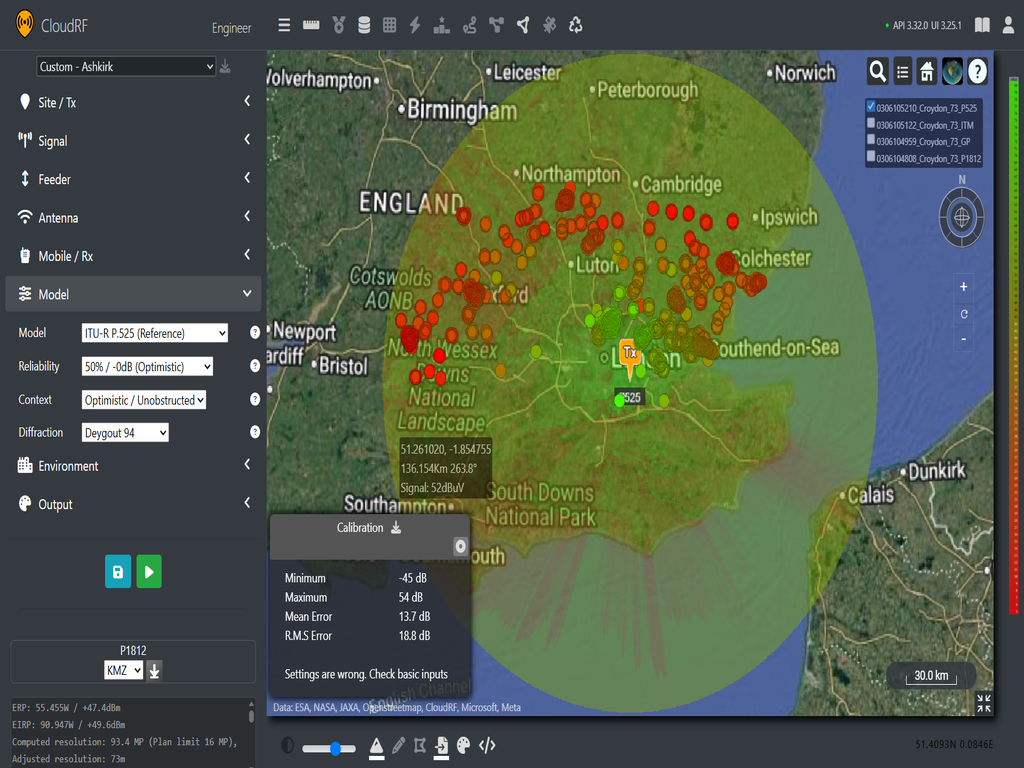

Croydon (191.25 MHz)

The second test involves a dataset collected from the Croydon transmitting station in Upper Norwood, London. The VHF antenna sits at 137 m above the ground, so it is very high compared to usual use cases. The receive antenna sits at 9.8 m, which places it well above most buildings and land cover except for dense urban areas like London. The data set contains 2000 data points within a 145 km radius of the tower.

- ITU-R P.1812 (Mean: -3.7 dB, RMS: 7.2 dB)

- General Purpose (Mean: -4.7 dB, RMS: 8.3 dB)

- Egli (Mean: -11.6 dB, RMS: 13 dB)

- ITU-R P.525 (Mean: 9.3 dB, RMS: 11.6 dB)

- Irregular Terrain Model (Mean: -0.5 dB, RMS: 16.5 dB)

In this second test, we can see that our only acceptable prediction is P.1812 which will require further calibration to be tuned for this environment.

The third test uses data from the Emley Moor transmitter which broadcasts to the Yorkshire area. The data set contains 2000 points within a 100 km radius. The transmit height is 305 m and the receive height is 10 m.

Emley Moor (196.25 MHz)

- ITU-R P.1812 (Mean: -2.5 dB, RMS: 8.3 dB)

- ITM (Mean: -1 dB, RMS: 10 dB)

- ITU-R P.525 (Mean: -5.2 dB, RMS: 11.1 dB)

- Egli (Mean: 11 dB, RMS: 14.5 dB)

- General Purpose (Mean: -14.8 dB, RMS: 17.7 dB)

From our third data set we can see that P.1812 gives the best prediction again for these conditions. The significant heights involved worked against the ground based GP model but favoured ITM, developed for TV broadcasting.

VHF Conclusion

From our testing, we can see that without calibration, the models produce variable results with the test data sets. However, the one consistent exception is ITU-R P.1812 which gives a mean measurement error of -2.76 dB with an RMS of 8.8 dB. For this range and complex environment, this is a good result which can be improved further with clutter tuning.

We can also see that our mean and root mean square values are higher than the few dB we would expect in a cellular model, e.g. 6 dB. This is acceptable in this case as we are working over a very large area where the standard deviation of our results will increase as our resolution expands. With a large number of diverse data points, localised errors can be diluted to establish consistent performance across data sets.

Looking at our selection of models, it is not surprising to see P.1812 outperforming the rest. Egli is a 1950 empirical model for VHF broadcasting, but it is not terrain aware so will tend to under or over attenuate through irregular terrain. Free space (P.525) will tend to be over-optimistic over long distances, and the added attenuation for General Purpose is better suited to handheld radios amongst clutter. So naturally, for CloudRF users, we’d recommend starting with ITU-R P.1812 when working with VHF.

800 MHz (LoRa, UHF, Cellular)

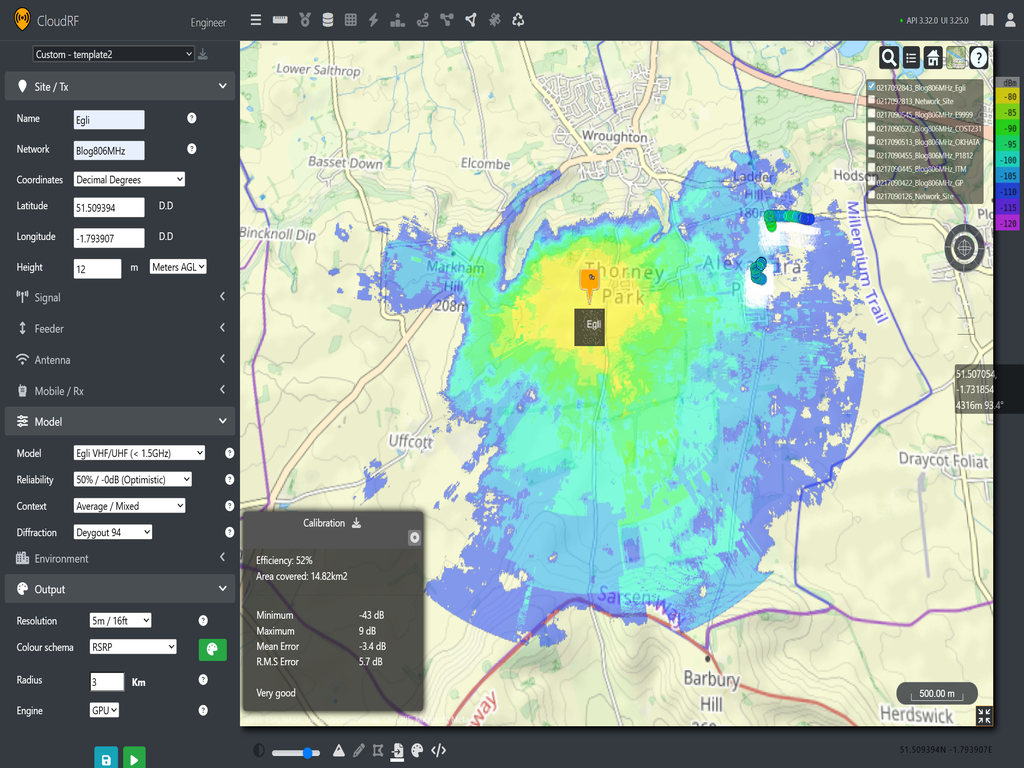

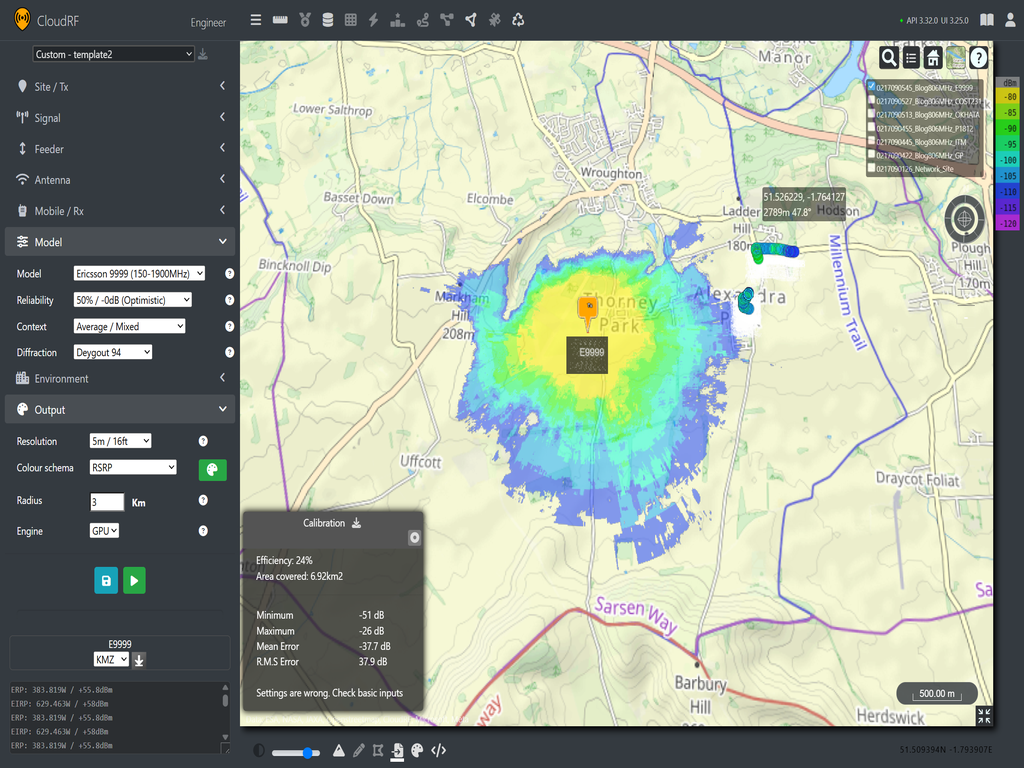

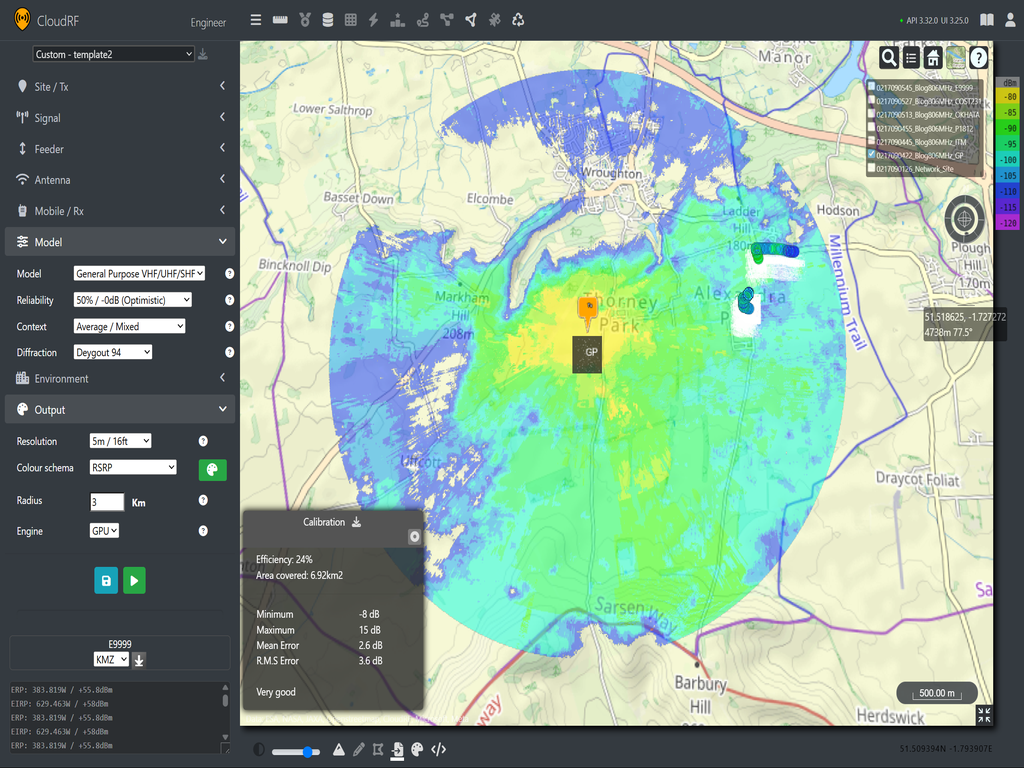

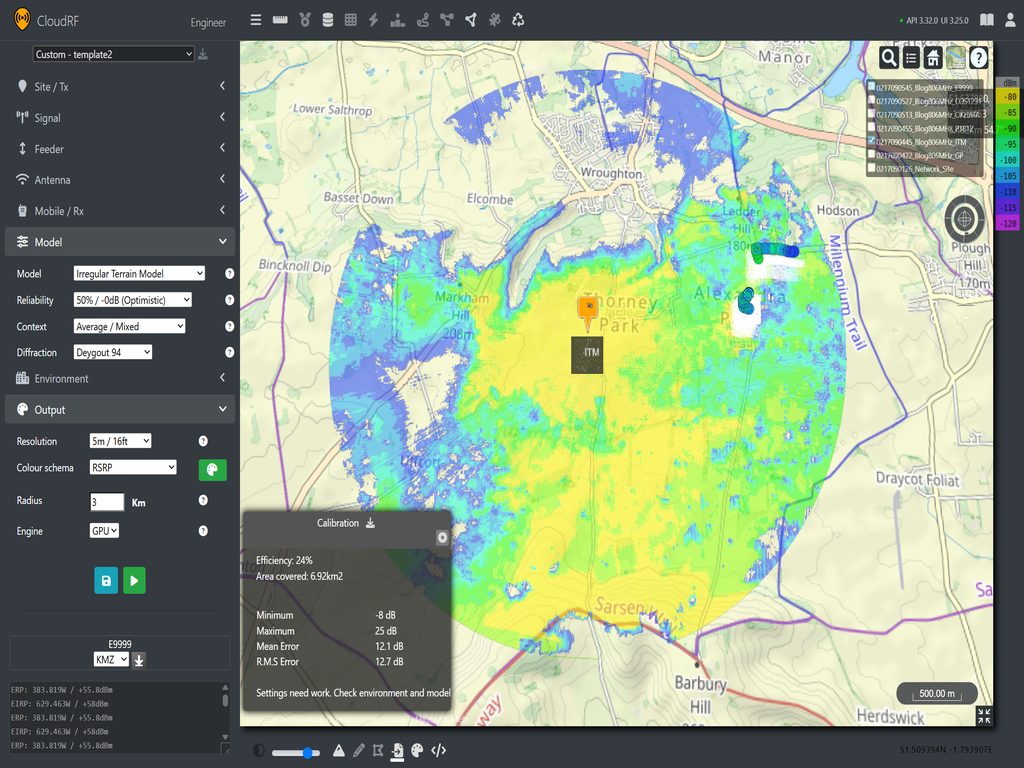

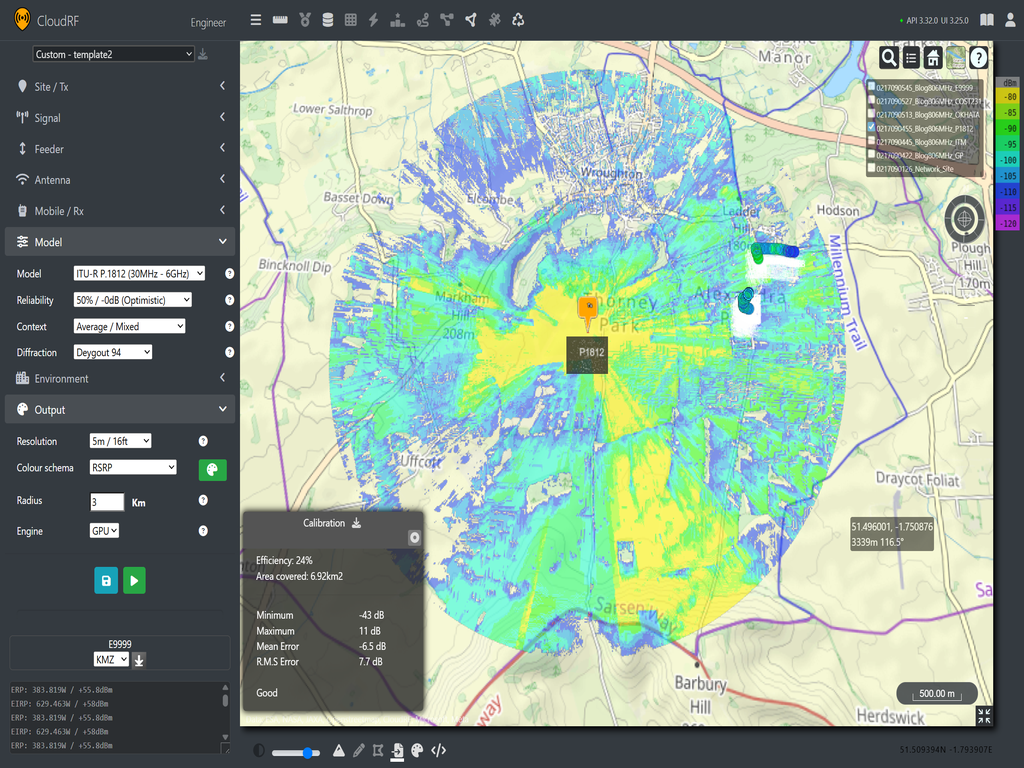

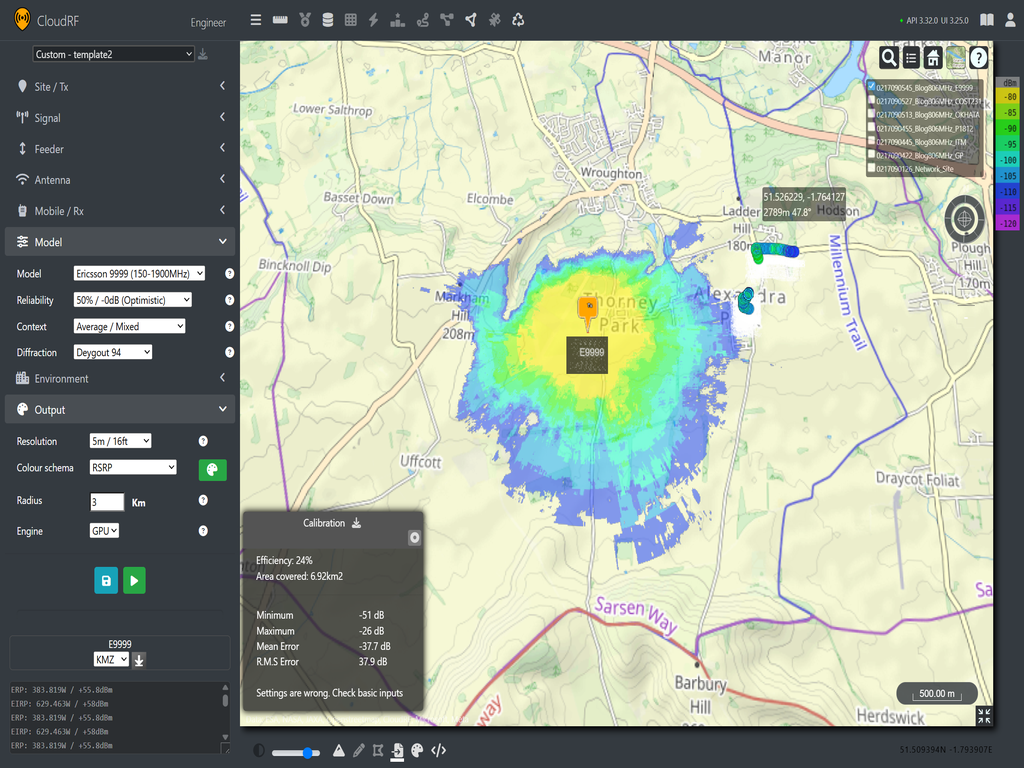

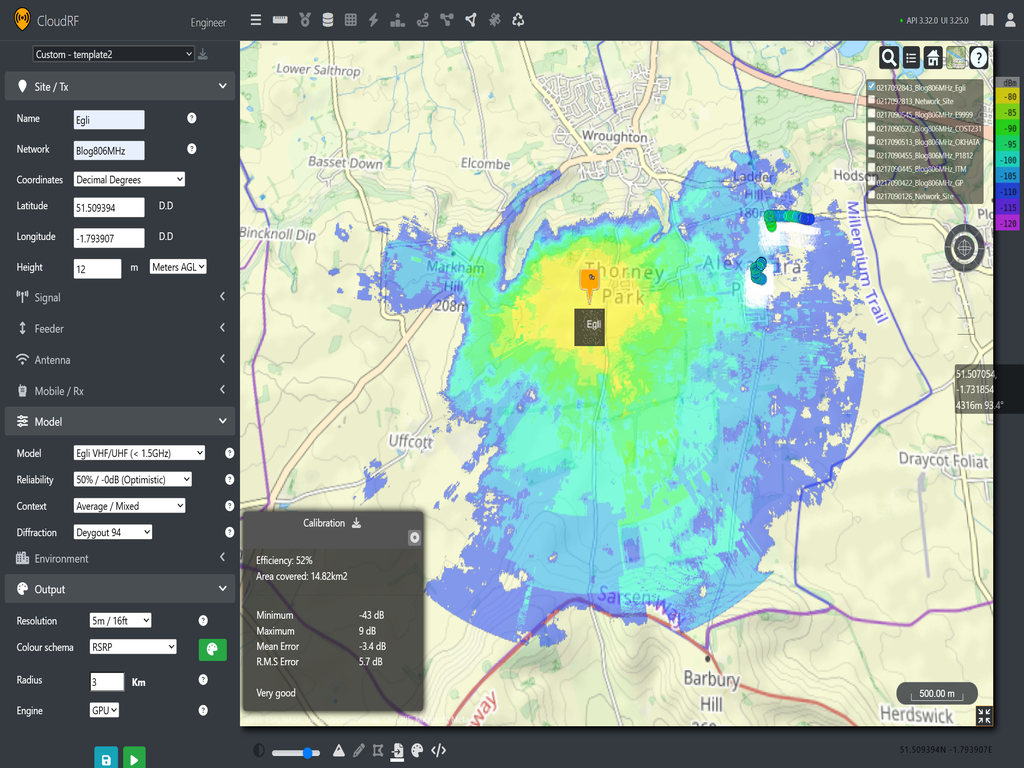

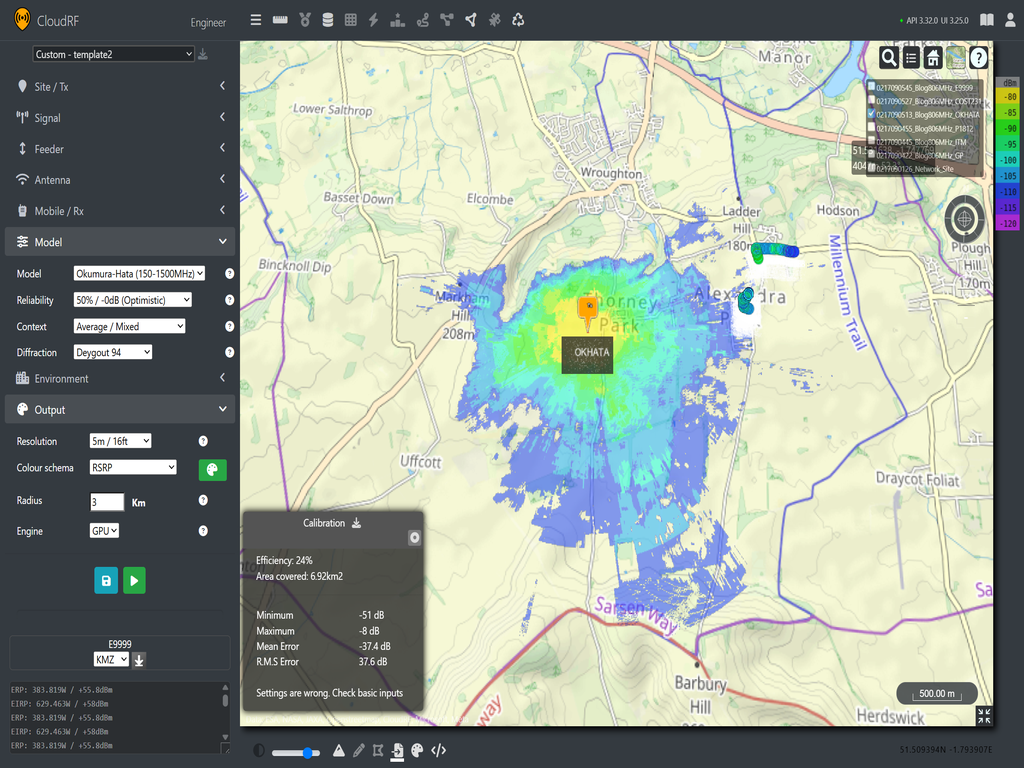

For this test, we will be using an LTE band 20 (806 MHz) transmission tower with RSRP measurements taken from test handsets located within a 3 km radius of the antenna. The antenna itself sits 12 m above the ground. This serves as an excellent test of lower frequency LTE, 3G and LoRa (868 MHz). Using field measurements, we will make predictions using CloudRF and then use the calibration tool to compare the mean and RMS errors and see if we have a good fit between our model and our data.

The models we will test are: General Purpose, Irregular Terrain Model, ITU-R P.1812, Okumura-Hata, Ericsson 9999 and Egli. We won’t be testing COST 231 as the test data is below its intended frequency range of 1.5-2 GHz.

The area of interest is located to the south of the village of Wroughton, which is south of Swindon. The site sits in an open field surrounded by fields and a solar farm with good inter-visibility around the former airfield. The village of Wroughton sits to the north in the shadow of a hill, so we would expect to only see a little coverage through diffraction to the north with stronger coverage to the west, south and east being broken up by hedgerows and sparse buildings.

Wroughton (806 MHz)

- General Purpose (Mean: 2.6 dB, RMS: 3.6 dB)

- Egli (Mean: -3.4 dB, RMS: 5.7 dB)

- ITU-R P.1812 (Mean: -6.5 dB, RMS: 7.7 dB)

- Irregular Terrain Model (Mean: 12.1 dB, RMS: 12.7 dB)

- Okumura-Hata (Mean: -37.7 dB, RMS: 37.9 dB)

- Ericsson 9999 (Mean: -37.7 dB, RMS: 37.9 dB)

Results

From the test, we can see that General Purpose and ITU-R P.1812 are good fits for the data, offering single digit error. The ITM prediction is under attenuating, giving stronger coverage over similar areas than General Purpose and ITU-R P.1812. We can also see that Okumura-Hata and Ericsson 9999 are over attenuating, and we aren’t seeing coverage in the area around our readings at all.

To understand these results, we can go back to their intended use cases: Okumura-Hata and Ericsson 9999 are intended for built up urban environments and expect more obstacles and chances for diffraction. For the test template, we are using an average/mixed profile which may be over attenuating our predictions without the environment providing enough paths for diffraction. If we look at the area of the test, we can see there are very few buildings, with plenty of open fields and trees. If the context is adjusted to unobstructed, both Okumura-Hata and Ericsson 9999 should yield a better fit to our test data.

- Ericsson 9999 Unobstructed (Mean: 2.4 dB, RMS: 3.5 dB)

- Okumura-Hata Unobstructed (Mean: -6.8 dB, RMS: 7.6 dB)

By changing the context, we can see that both models now fit the data well.

UHF conclusion

CloudRF recommends ITU-R P.1812 or the General Purpose model for modelling the 800 MHz range. Our experiment supports this, demonstrating that both models provide reliable results when paired with quality clutter and land cover data.

As this test shows, empirical models such as Okumura‑Hata and Ericsson 9999 can be difficult to use without reference data because they depend heavily on selecting the correct environmental context. Without field measurements, you must rely on your interpretation of the environment to decide whether a model should be treated as urban, suburban, rural, or unobstructed. This requires time, experience, and careful reading of the model documentation especially when planning in remote or complex areas.

Deterministic models, on the other hand, have been shown to perform consistently when supplied with good-quality terrain and clutter data. As we continue conducting field tests, we are becoming increasingly confident in recommending ITU-R P.1812 as a robust starting point for modelling LTE Band 20 (800 MHz) and similar low-frequency systems. Because it is terrain aware, it offers good accuracy even before calibration, which makes it highly useful for time sensitive planning tasks. Additionally, as better LiDAR and DTM data becomes available, these models will increase in effectiveness while legacy empirical models become obsolete.

Snow covered trees

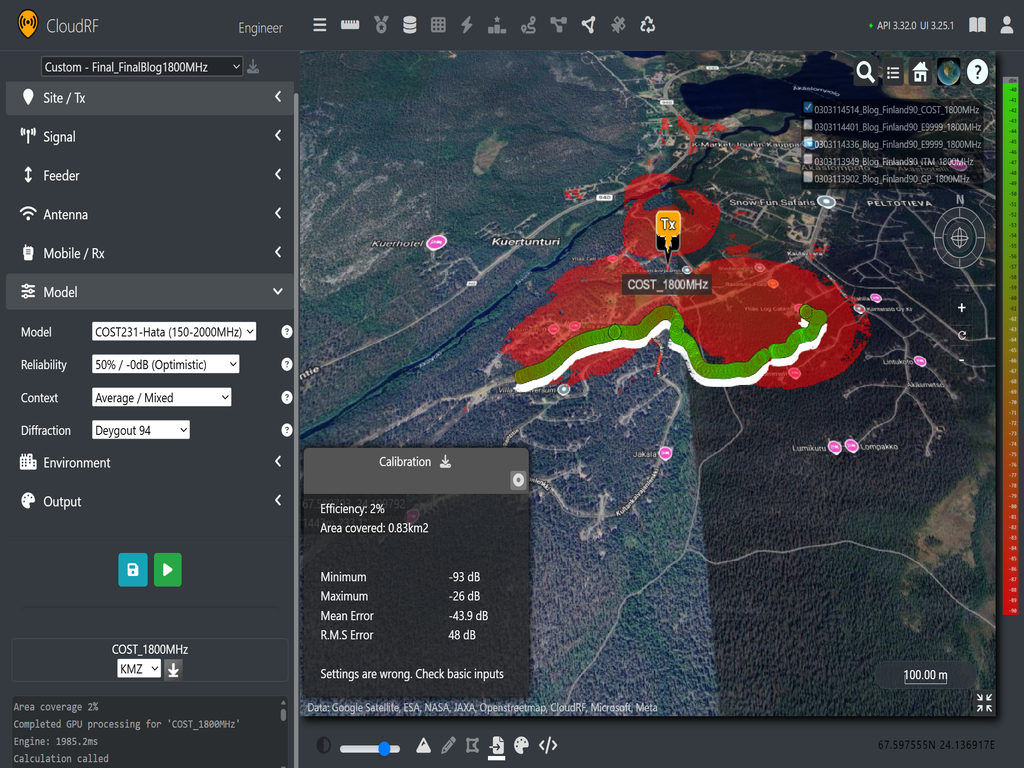

Taking the test up a gear to the Arctic Circle, we collected LTE survey data using the RantCell survey app from the top of Finland across multiple bands to investigate the accuracy impact of thick snow on trees.

Snow is a lattice of water which reflects and attenuates RF so is challenging to simulate, especially as it changes!

The field data collected gives us RSRP (Reference Signal Received Power) from two LTE bands (band 1 and band 3) from our tower of interest. This gives us a good opportunity to use one data set to calibrate a model and then use the second set to see if model prediction performance remains consistent across frequency. The frequencies of the two bands do make model selection more limited as band 1 (~2.1GHz) sits above the threshold for Okumura-Hata, its extension COST-231 and Ericsson 9999.

For the data itself we are looking at a small section of coverage near the tower surrounded by large snow-covered trees in undulating terrain. The collection was performed on a ski track under the trees which was often covered by a tree canopy.

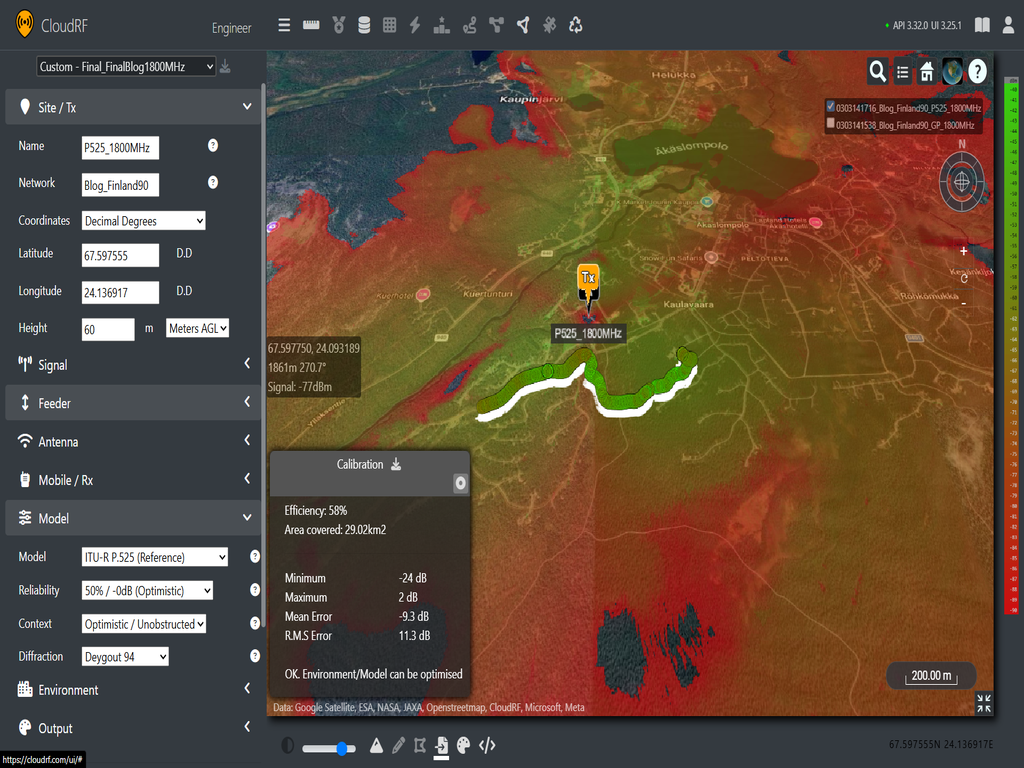

The signal RSSI was calculated as 30 dB above measured RSRP using the known 20 MHz bandwidth, and the data was fed into our calibration tool to plot the points. From the app’s data, we know the LTE bands for each of our data sets, so we have a centre frequency and bandwidth. Using the photograph of the mast we can approximate its height at 60 m. With the mast location set, we can then make two sets of predictions for the 1820 MHz and 2140 MHz downlinks and compare model performance across both. We will use P.525 as our free space reference model.
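The 30 dB figure follows from the number of subcarriers in the channel: RSRP is the power of a single reference subcarrier, so scaling it up to the full bandwidth adds 10·log10 of the subcarrier count. The sketch below assumes every subcarrier carries the same power as the reference signal (a fully loaded cell), which is a common approximation rather than an exact relationship; the function names are illustrative.

```python
import math

# Resource blocks per standard LTE channel bandwidth in MHz
RESOURCE_BLOCKS = {1.4: 6, 3: 15, 5: 25, 10: 50, 15: 75, 20: 100}
SUBCARRIERS_PER_RESOURCE_BLOCK = 12


def rsrp_to_rssi_offset_db(bandwidth_mhz: float) -> float:
    """Offset in dB between per-subcarrier RSRP and full-bandwidth power."""
    if bandwidth_mhz not in RESOURCE_BLOCKS:
        raise ValueError(f"unsupported LTE bandwidth: {bandwidth_mhz} MHz")
    subcarriers = RESOURCE_BLOCKS[bandwidth_mhz] * SUBCARRIERS_PER_RESOURCE_BLOCK
    return 10.0 * math.log10(subcarriers)


def estimate_rssi_dbm(rsrp_dbm: float, bandwidth_mhz: float = 20) -> float:
    """Approximate wideband received power from RSRP, assuming full load."""
    return rsrp_dbm + rsrp_to_rssi_offset_db(bandwidth_mhz)


if __name__ == "__main__":
    print(f"20 MHz offset: {rsrp_to_rssi_offset_db(20):.2f} dB")
    print(f"RSRP -100 dBm is roughly {estimate_rssi_dbm(-100.0):.1f} dBm wideband")
```

For a 20 MHz carrier this gives an offset a little under 31 dB, which we round to 30 dB.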

1820 MHz

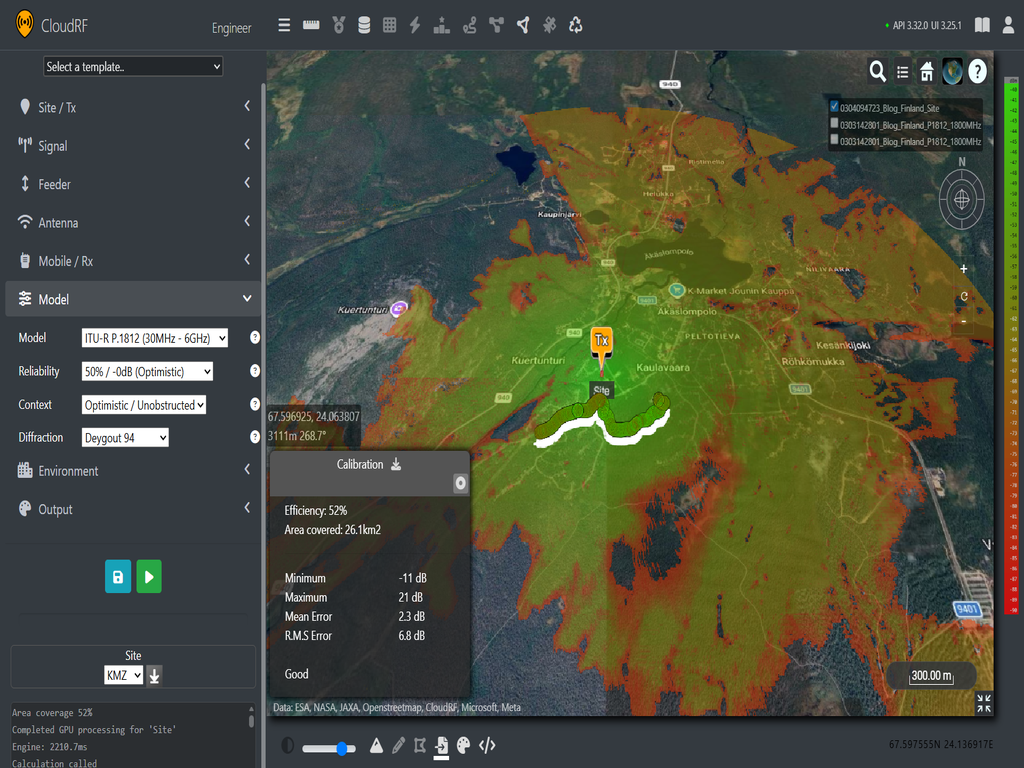

- ITU-R P.1812 (Mean: 2.3 dB, RMS: 6.8 dB)

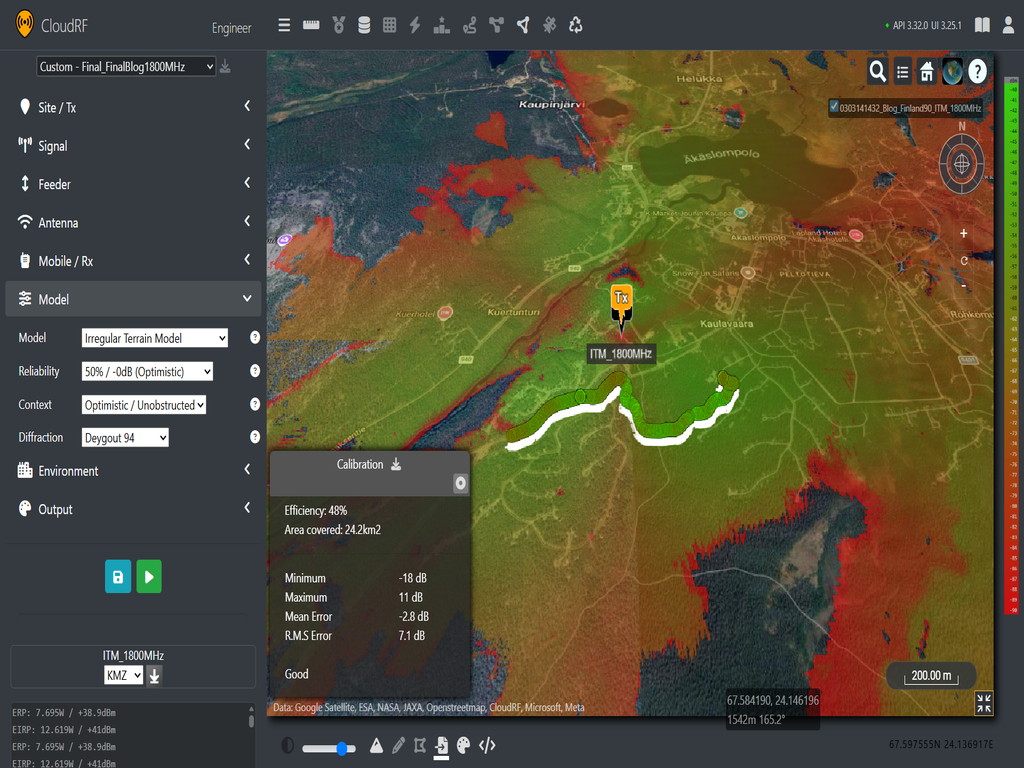

- Irregular Terrain Model (Mean: -2.8 dB, RMS: 7.1 dB)

- ITU-R P.525 (Mean: -9.3 dB, RMS: 11.3 dB)

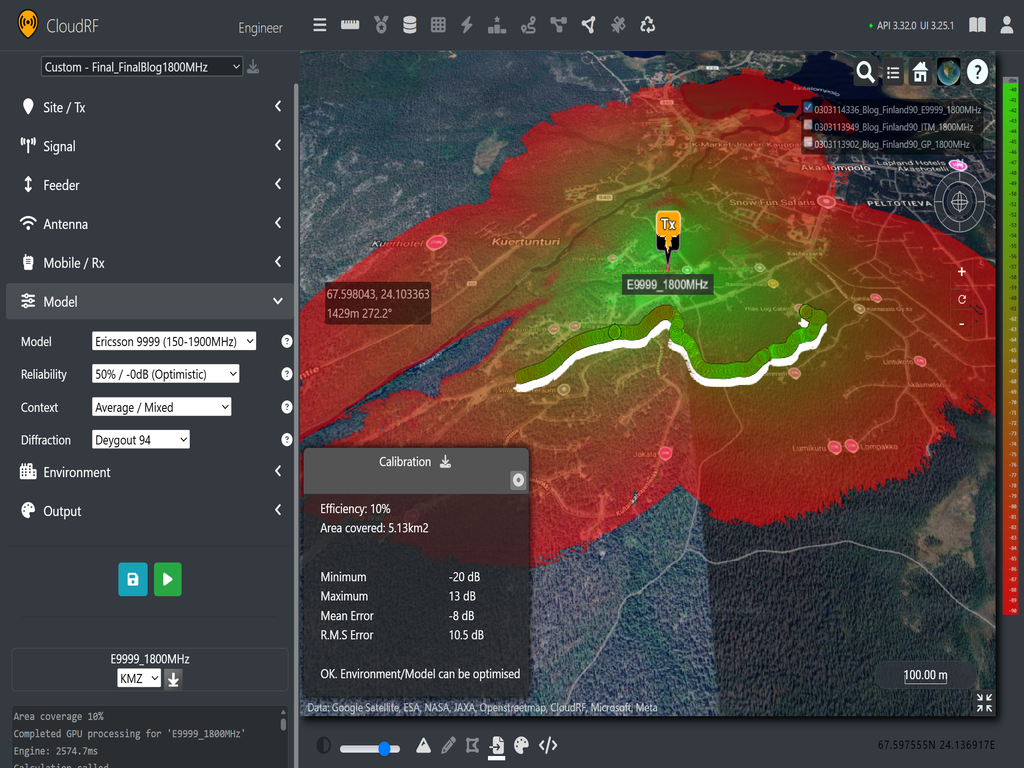

- Ericsson 9999 (Mean: -8 dB, RMS: 10.5 dB)

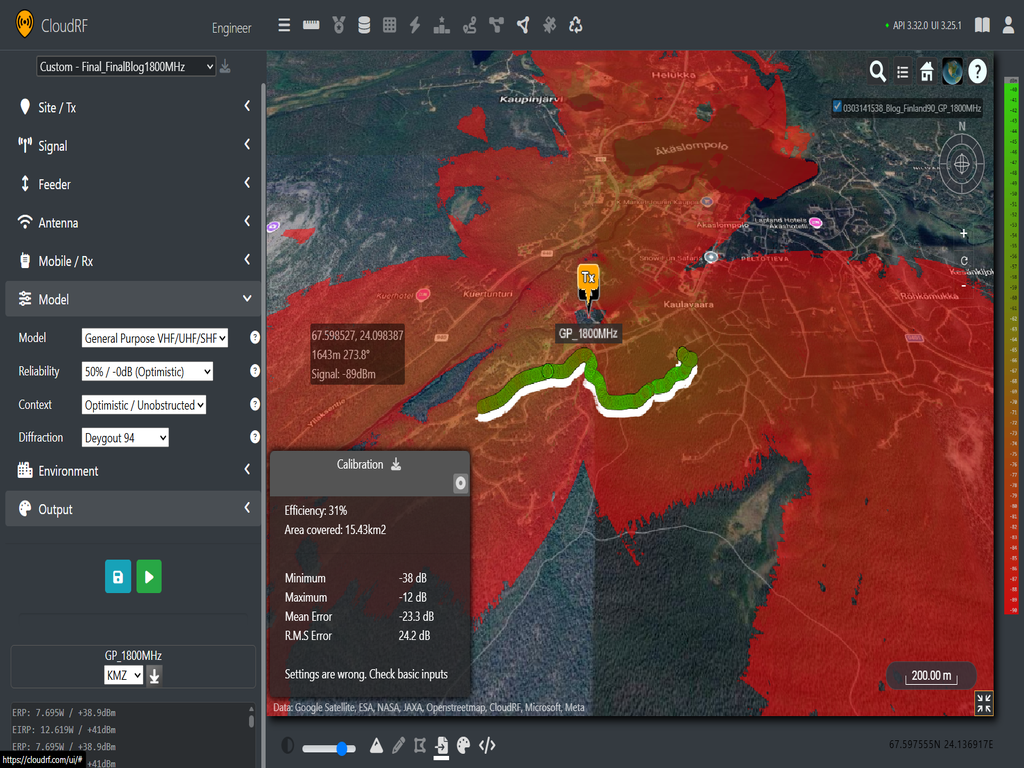

- General Purpose (Mean: -23.3 dB, RMS: 24.2 dB)

- COST-231 (Mean: -43.9 dB, RMS: 48 dB)

When comparing the predictions to the 1820 MHz data sets, we can see that P.1812 and ITM are close predictors of the measured values. Additionally, when Ericsson 9999 is used with an average/suburban context, it gives an okay estimate, but has a much smaller coverage area overall, suggesting that more tuning is required to better match the attenuation caused by the large snow-covered trees. General Purpose is over attenuated, which was not surprising given our free space path loss is a close fit and the 20 dB offset was added following tests with ground based tactical radio networks, not 60 m masts. COST-231 is unusable, which was expected given it is well outside its intended environment.

To test consistency, we can now look at the test results at 2140 MHz. Unfortunately, we can’t include Ericsson 9999 or COST-231 as the operating frequency is too high. However, we can test the Stanford University Interim (SUI) model which is rated for above 1.9 GHz.

2140 MHz

- ITU-R P.1812 (Mean: -5 dB, RMS: 9.2 dB)

- Irregular Terrain Model (Mean: -5.1 dB, RMS: 9.6 dB)

- ITU-R P.525 (Mean: -9.4 dB, RMS: 12 dB)

- General Purpose (Mean: -23.4 dB, RMS: 24.6 dB)

- SUI (Mean: -69.3 dB, RMS: 72.9 dB)

From this comparison, we can again see similar results. ITU-R P.1812 is again providing the best prediction, followed closely by ITM. The observation for P.525 and General Purpose remains the same. The SUI model is heavily over attenuating using an unobstructed context. This is not surprising when looking at the generic path loss graphs shown previously. SUI has consistently been the most conservative microwave model in our collection and, based on its performance in comparison with other models, it will be retired from our API in due course.

LTE conclusion

Looking at the two sets of predictions, we can see consistency in performance from both P.1812 and ITM, with P.1812 giving the best fit. Their coverage maps are generally consistent in shape with themselves and each other, and we see more attenuation through the trees at our higher frequency as expected.

Our two models are showing their utility by giving accurate predictions despite heavy snow based on terrain and clutter data alone. The next question for these two models now is how to tune the clutter for each frequency for a better match.

Key findings for choosing a Propagation Model

Having conducted tests across six locations with different datasets and frequencies, we’ve gained insights into how each propagation model performs. The results of those tests have been broadly consistent with deterministic models like ITU-R P.1812 and its legacy predecessor ITM being consistently accurate before calibration and clutter tuning.

The old empirical models can be accurate, but they require the correct context to make an accurate prediction and without test data, it is difficult to tune them to their respective environment due to their fixed path loss curves. This is why we are recommending ITU-R P.1812 as our default model for VHF, LoRa and LTE propagation when using CloudRF. You can still use empirical models, but you’ll have to commit to collecting field data for tuning.

To further improve accuracy, users can tune our clutter profiles with variables such as tree heights or average attenuation through buildings. To understand where these values come from, please check out our past model and clutter improvements blogs or, if you want to accelerate the process, see our calibration with machine learning demo with sample code on our GitHub.

What about Machine Learning?

The promise of Machine Learning models to improve accuracy (and speed) is tempting but it depends upon an enormous quantity of accurate training data. In our experience, ML researchers struggle to generate the vast quantity of accurate and expensive test data needed to develop even small demos.

Given enough training data, an ML model could be quicker and just as accurate as physics based simulation or potentially a drive survey.

However, it is naive to criticise the performance of physics based simulation in favour of ML, as ML model generation relies upon simulation for its training data. This creates a dichotomy whereby ML developers need to both criticise and rely upon simulation tools to develop an accurate model, and secure funding. There is a solution to this which requires academic honesty and a mature and scalable API, but one of those requirements is harder to come by than the other.

Further Reading

Fast simulation calibration with Machine Learning